ETL Process Optimization: Strategies for High-Performance Data Pipelines

In today’s data-driven landscape, businesses rely on efficient ETL pipelines to transform raw data into actionable insights. ETL process optimization ensures that organizations can handle growing data volumes, reduce latency, and maintain data quality while minimizing resource consumption. Optimized ETL workflows improve decision-making speed, reduce operational costs, and enable scalable analytics.

This guide explores the principles, strategies, tools, and best practices for ETL process optimization, providing actionable insights for data engineers, IT leaders, and analytics professionals seeking to maximize the performance and reliability of their ETL pipelines.

Understanding ETL Process Optimization

At its core, ETL process optimization means refining Extract, Transform, Load (ETL) workflows so they do the same work with less time and fewer resources. In practice this involves reducing processing time, minimizing errors, and ensuring consistent data delivery to downstream systems.

Efficiency improvements can range from redesigning transformation logic to implementing parallel processing. The ultimate goal is to create an ETL process that scales seamlessly with increasing data volume while preserving accuracy and reliability.

Key Components of ETL Workflows

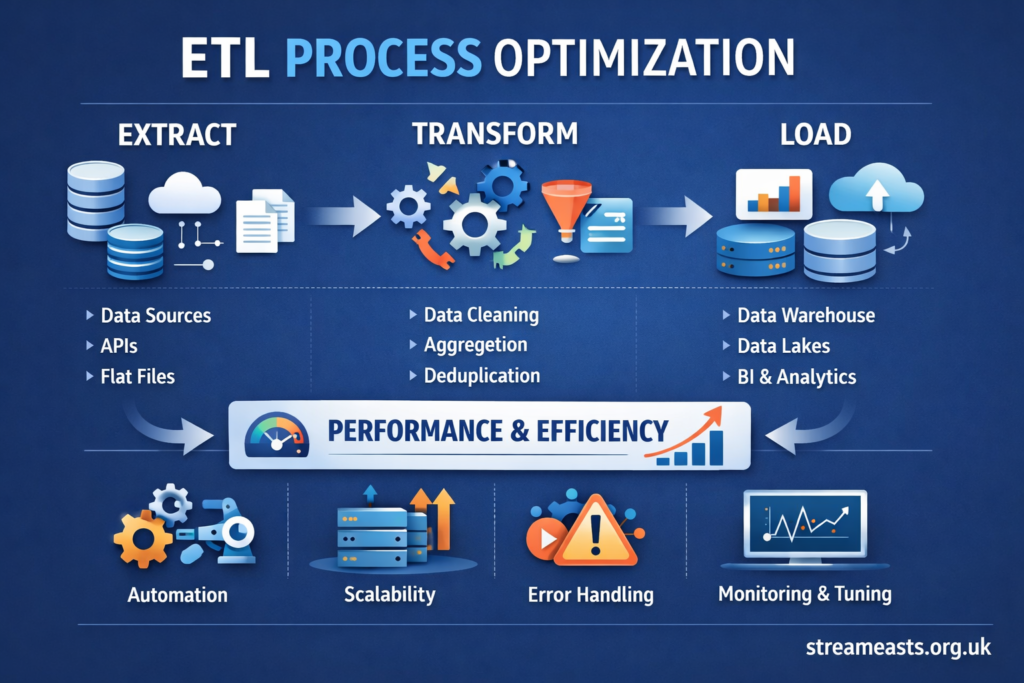

ETL pipelines consist of three primary stages: extraction, transformation, and loading. Each stage offers unique opportunities for optimization:

- Extraction focuses on retrieving data from source systems efficiently, avoiding bottlenecks caused by slow queries or API limitations.

- Transformation involves cleaning, normalizing, and aggregating data; optimizing this stage can reduce unnecessary computations and improve downstream analytics.

- Loading ensures that the processed data reaches the target database or data warehouse reliably, where performance tuning can prevent insert/update delays.

Optimizing these components requires understanding data dependencies, volumes, and the architecture of both source and target systems.
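As one illustration of tuning the loading stage, the sketch below inserts rows in batches, with one transaction per batch, rather than committing row by row. It uses Python's built-in `sqlite3` module as a stand-in target; the `customers` table, the `load_rows` and `batched` helpers, and the batch size of 500 are assumptions made for the example, not recommendations for any particular warehouse.

```python
import sqlite3
from itertools import islice
from typing import Iterable, Iterator, List, Tuple

Row = Tuple[int, str]


def batched(rows: Iterable[Row], size: int) -> Iterator[List[Row]]:
    """Yield successive lists of at most `size` rows."""
    it = iter(rows)
    while True:
        batch = list(islice(it, size))
        if not batch:
            return
        yield batch


def load_rows(conn: sqlite3.Connection, rows: Iterable[Row], batch_size: int = 500) -> int:
    """Insert rows in batches, one transaction per batch; return rows loaded."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers (id INTEGER PRIMARY KEY, name TEXT)"
    )
    loaded = 0
    for batch in batched(rows, batch_size):
        with conn:  # commits on success, rolls back if the batch fails
            conn.executemany(
                "INSERT OR REPLACE INTO customers (id, name) VALUES (?, ?)", batch
            )
        loaded += len(batch)
    return loaded


conn = sqlite3.connect(":memory:")
total = load_rows(conn, ((i, f"customer-{i}") for i in range(1200)), batch_size=500)
count = conn.execute("SELECT COUNT(*) FROM customers").fetchone()[0]
print(total, count)
```

The right batch size depends on the target system, so it is worth measuring a few values rather than assuming one.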

Strategies for ETL Process Optimization

There are multiple strategies to enhance ETL performance. Key approaches include incremental loading, partitioning data, and leveraging parallel processing.

Incremental loading reduces processing by handling only new or modified records rather than the entire dataset. Partitioning divides large datasets into smaller chunks that can be processed independently. Parallel execution runs multiple ETL tasks at the same time, which can cut overall runtime substantially when those tasks do not depend on one another.

Selecting the right combination of strategies depends on data characteristics, system architecture, and organizational goals.
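To make partitioning concrete, here is a minimal sketch that splits records into partitions by month and processes each partition on its own. The record layout (`order_date`, `amount`) and the helper names `partition_by` and `total_amount` are hypothetical; a real pipeline would usually partition at the storage or query layer instead of in memory.

```python
from collections import defaultdict
from typing import Callable, Dict, Hashable, Iterable, List

Record = Dict[str, object]


def partition_by(
    records: Iterable[Record], key: Callable[[Record], Hashable]
) -> Dict[Hashable, List[Record]]:
    """Group records into partitions using the given key function."""
    partitions = defaultdict(list)
    for record in records:
        partitions[key(record)].append(record)
    return dict(partitions)


def total_amount(partition: List[Record]) -> float:
    """Example per-partition transformation: sum the amount column."""
    return sum(float(r["amount"]) for r in partition)


orders = [
    {"order_date": "2024-01-15", "amount": 10.0},
    {"order_date": "2024-01-20", "amount": 5.5},
    {"order_date": "2024-02-03", "amount": 7.0},
]

# Partition key: the YYYY-MM prefix of the order date.
by_month = partition_by(orders, key=lambda r: str(r["order_date"])[:7])
monthly_totals = {month: total_amount(rows) for month, rows in by_month.items()}
print(monthly_totals)
```

Because each partition is self-contained, the same structure also makes it straightforward to reprocess a single month or to hand partitions to separate workers.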

Data Quality and Integrity in Optimization

Optimizing ETL processes must not compromise data quality. Implementing automated data validation checks ensures that data integrity is preserved while reducing manual intervention.

Techniques such as constraint enforcement, schema validation, and duplicate detection can be integrated directly into ETL pipelines. Maintaining high-quality data enhances analytics accuracy and protects against costly errors.
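The checks mentioned above can be sketched as a single validation step that separates clean records from rejected ones. The schema (`id` as an integer, `email` as a string), the `@` constraint, and the `validate` function are illustrative assumptions; production pipelines often delegate this work to a dedicated validation library or to database constraints.

```python
from typing import Any, Dict, Iterable, List, Tuple

Record = Dict[str, Any]

# Hypothetical schema: field name -> expected Python type.
REQUIRED_FIELDS = {"id": int, "email": str}


def validate(records: Iterable[Record]) -> Tuple[List[Record], List[Tuple[Record, str]]]:
    """Split records into (valid, rejected); rejected entries carry a reason."""
    valid: List[Record] = []
    rejected: List[Tuple[Record, str]] = []
    seen_ids = set()
    for rec in records:
        problem = None
        # Schema validation: every required field must exist with the right type.
        for field, expected in REQUIRED_FIELDS.items():
            if not isinstance(rec.get(field), expected):
                problem = f"{field}: expected {expected.__name__}"
                break
        # Constraint enforcement: a deliberately simple email rule.
        if problem is None and "@" not in rec["email"]:
            problem = "email: missing '@'"
        # Duplicate detection on the primary key.
        if problem is None and rec["id"] in seen_ids:
            problem = "id: duplicate"
        if problem is None:
            seen_ids.add(rec["id"])
            valid.append(rec)
        else:
            rejected.append((rec, problem))
    return valid, rejected


rows = [
    {"id": 1, "email": "a@example.com"},
    {"id": 1, "email": "b@example.com"},
    {"id": "2", "email": "c@example.com"},
    {"id": 3, "email": "no-at-sign"},
]
valid, rejected = validate(rows)
for rec, reason in rejected:
    print(rec, "->", reason)
```

Routing rejected rows to a separate list, instead of failing the whole run, keeps the pipeline moving while leaving a record of what needs attention.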

Leveraging ETL Tools for Performance

Modern ETL platforms offer built-in optimization features, including caching, pre-aggregation, and workflow orchestration. Tools like Apache NiFi, Talend, and Informatica provide frameworks for parallel execution, error handling, and monitoring.

Choosing the right ETL tool depends on business requirements, data volume, and integration capabilities. Proper configuration and tuning are essential to fully realize the performance benefits of these tools.

Parallelism and Load Balancing Techniques

Parallelism in ETL involves executing multiple processes simultaneously to reduce overall runtime. This can include multi-threaded transformations, distributed processing frameworks, and workload partitioning.

Load balancing distributes ETL tasks across multiple nodes or servers, preventing bottlenecks. By carefully designing parallel workflows, organizations can achieve significant improvements in pipeline efficiency.
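A minimal sketch of parallel execution over independent partitions, using the standard library's `concurrent.futures`. The `transform_partition` function and the partition names are placeholders. A thread pool is assumed here because many ETL steps are I/O-bound; for CPU-heavy transformations a `ProcessPoolExecutor` is usually the better fit.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List


def transform_partition(partition: List[int]) -> int:
    """Placeholder transformation: sum of squares for one partition."""
    return sum(x * x for x in partition)


def run_parallel(partitions: Dict[str, List[int]], max_workers: int = 4) -> Dict[str, int]:
    """Submit every partition to the pool and collect results by partition name."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {
            name: pool.submit(transform_partition, rows)
            for name, rows in partitions.items()
        }
        # result() blocks until that task finishes and re-raises any task error.
        return {name: future.result() for name, future in futures.items()}


partitions = {"p0": [1, 2, 3], "p1": [4, 5], "p2": []}
results = run_parallel(partitions)
print(results)
```

The pool hands each queued task to whichever worker is free, which gives a simple form of load balancing on one machine; spreading work across nodes requires a distributed framework.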

Incremental vs. Full Load Optimization

One critical decision in ETL process optimization is choosing between full data loads and incremental updates. Full loads are simpler but resource-intensive, because they often reprocess unchanged data. Incremental loads are more efficient but require a tracking mechanism, such as a last-modified timestamp or change data capture, to identify changed records.

The table below compares these approaches:

| Load Type | Advantages | Disadvantages | Best Use Cases |
| --- | --- | --- | --- |
| Full Load | Simplicity, full refresh | High resource consumption, longer runtime | Small datasets, infrequent updates |
| Incremental Load | Efficiency, reduced runtime | Complex logic, requires change tracking | Large datasets, frequent updates |
| Hybrid Load | Balance of both | Moderate complexity | Medium-to-large datasets with periodic full refreshes |

Selecting the appropriate load strategy is essential for sustainable ETL performance.
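One common way to track changes for an incremental load is a high-water mark: the pipeline stores the latest modification timestamp it has processed and, on the next run, extracts only rows newer than that value. The sketch below assumes each source row carries an `updated_at` column; that column and the `incremental_extract` helper are assumptions for illustration.

```python
from datetime import datetime
from typing import Any, Dict, List, Tuple

Row = Dict[str, Any]


def incremental_extract(
    source_rows: List[Row], last_watermark: datetime
) -> Tuple[List[Row], datetime]:
    """Return rows changed since the watermark and the new watermark value."""
    changed = [r for r in source_rows if r["updated_at"] > last_watermark]
    # If nothing changed, keep the old watermark.
    new_watermark = max((r["updated_at"] for r in changed), default=last_watermark)
    return changed, new_watermark


source = [
    {"id": 1, "updated_at": datetime(2024, 1, 1, 9, 0)},
    {"id": 2, "updated_at": datetime(2024, 1, 2, 9, 0)},
    {"id": 3, "updated_at": datetime(2024, 1, 3, 9, 0)},
]

watermark = datetime(2024, 1, 1, 12, 0)
changed, watermark = incremental_extract(source, watermark)
print([r["id"] for r in changed], watermark)

# A second run with no new changes extracts nothing.
changed_again, watermark_again = incremental_extract(source, watermark)
```

A timestamp watermark does not see hard deletes in the source, which is one reason a hybrid strategy with periodic full refreshes can be worthwhile.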

Automation and Scheduling Optimization

Automating ETL workflows reduces manual errors and ensures timely data availability. Scheduling jobs during off-peak hours can improve system performance by reducing resource contention.

Modern ETL schedulers also support dependency management and dynamic triggers, allowing pipelines to respond to data changes in real time or near real time.
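Dependency management comes down to running each task only after the tasks it depends on have finished. The sketch below shows the idea with Python's standard `graphlib` module (Python 3.9 or later); the task names are hypothetical, and a real scheduler adds retries, triggers, and persistence on top of this ordering.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# Each task maps to the set of tasks that must finish before it can start.
dependencies: Dict[str, Set[str]] = {
    "extract_orders": set(),
    "extract_customers": set(),
    "transform_sales": {"extract_orders", "extract_customers"},
    "load_warehouse": {"transform_sales"},
}


def execution_order(deps: Dict[str, Set[str]]) -> List[str]:
    """Return one valid run order; raises graphlib.CycleError on circular dependencies."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)
```

The two extract tasks have no dependency on each other, so a scheduler is free to run them in either order or in parallel.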

Monitoring and Performance Metrics

Continuous monitoring is vital for maintaining optimized ETL pipelines. Key performance metrics include execution time, throughput, error rates, and resource utilization.

By analyzing these metrics, data teams can identify bottlenecks, predict failures, and proactively adjust workflows. Monitoring also enables benchmarking improvements over time.
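As a rough sketch of how three of these metrics might be captured for a single run, the example below wraps a per-row transformation and records execution time, throughput, and error rate. The `RunMetrics` class and `run_with_metrics` function are hypothetical names; resource utilization is left out because it is normally collected by the operating system or the platform's own monitoring.

```python
import time
from dataclasses import dataclass
from typing import Any, Callable, Iterable


@dataclass
class RunMetrics:
    rows_ok: int = 0
    rows_failed: int = 0
    seconds: float = 0.0

    @property
    def error_rate(self) -> float:
        """Fraction of rows that failed; 0.0 when no rows were processed."""
        total = self.rows_ok + self.rows_failed
        return self.rows_failed / total if total else 0.0

    @property
    def throughput(self) -> float:
        """Successfully processed rows per second."""
        return self.rows_ok / self.seconds if self.seconds > 0 else 0.0


def run_with_metrics(rows: Iterable[Any], transform: Callable[[Any], Any]) -> RunMetrics:
    """Apply transform to every row, counting successes and failures."""
    metrics = RunMetrics()
    start = time.perf_counter()
    for row in rows:
        try:
            transform(row)
            metrics.rows_ok += 1
        except (ValueError, TypeError):
            metrics.rows_failed += 1
    metrics.seconds = time.perf_counter() - start
    return metrics


metrics = run_with_metrics(["1", "2", "oops", "4"], int)
print(metrics.rows_ok, metrics.rows_failed, metrics.error_rate)
```

Storing these values per run is what makes it possible to compare runs over time and to notice a regression before it becomes an outage.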

Cloud-Based ETL Optimization

Cloud-based ETL solutions allow dynamic scaling, reducing infrastructure limitations. Features like serverless architecture and on-demand resource provisioning improve pipeline efficiency and cost-effectiveness.

Cloud platforms also offer integrated monitoring, automated failover, and parallel processing capabilities, which can be leveraged to optimize ETL processes at scale.

ETL Process Optimization Case Studies

Real-world examples highlight the impact of ETL process optimization. Organizations adopting parallel execution, incremental loading, and cloud scaling have reported processing-time reductions of up to 70% while maintaining data accuracy.

These case studies illustrate that careful planning, combined with technology, can transform ETL pipelines from operational bottlenecks into strategic assets.

Common Challenges in Optimization

Despite its benefits, ETL optimization comes with challenges. Complex transformations, legacy systems, and inconsistent data can hinder efficiency gains.

Addressing these challenges requires thorough profiling, careful pipeline design, and the adoption of robust tools capable of handling variability and scale.

ETL Best Practices for Long-Term Success

Sustainable ETL optimization involves continuous improvement. Best practices include maintaining clear documentation, implementing automated testing, and periodically reviewing performance.

Investing in these practices ensures pipelines remain reliable, adaptable, and aligned with evolving business needs.

Expert Perspective

“Optimizing ETL isn’t just about speed; it’s about reliability, scalability, and actionable insights,” says a senior data engineer. “Efficient pipelines turn raw data into a strategic advantage.”

This perspective reinforces the importance of holistic optimization beyond mere runtime improvements.

Future Trends in ETL Optimization

Emerging trends include AI-driven optimization, real-time ETL, and hybrid cloud pipelines. Leveraging these trends can further reduce latency, increase data accuracy, and improve decision-making agility.

Organizations that proactively adopt these innovations are likely to maintain a competitive edge in data operations.

Conclusion

ETL process optimization is a critical strategy for any data-driven organization. By combining technology, strategy, and continuous monitoring, businesses can improve data pipeline performance, enhance data quality, and scale efficiently with growing data volumes.

Adopting best practices such as incremental loading, parallel execution, and cloud integration ensures pipelines remain robust, cost-effective, and future-ready.

FAQ

What is ETL process optimization?

ETL process optimization refers to refining ETL pipelines to improve efficiency, reduce runtime, maintain data quality, and ensure scalable data processing.

Why is ETL process optimization important?

Optimized ETL pipelines enable faster analytics, lower operational costs, and higher data reliability, supporting better business decisions.

How can incremental loading improve ETL efficiency?

Incremental loading processes only new or changed records, reducing runtime and resource consumption compared to full loads.

Which tools support ETL process optimization?

Tools like Apache NiFi, Talend, Informatica, and cloud-native platforms provide features like parallel execution, caching, and workflow automation to optimize ETL.

What metrics indicate optimized ETL performance?

Key metrics include execution time, throughput, error rates, and resource utilization, which help identify bottlenecks and validate improvements.